Apache Kafka and Apache Flink are two popular open-source tools that can be used for real-time data streaming and processing. While they share some similarities, there are also significant differences between them. In this blog tutorial, we will compare Apache Kafka and Apache Flink to help you understand which tool may be best suited for your needs.

What is Apache Kafka?

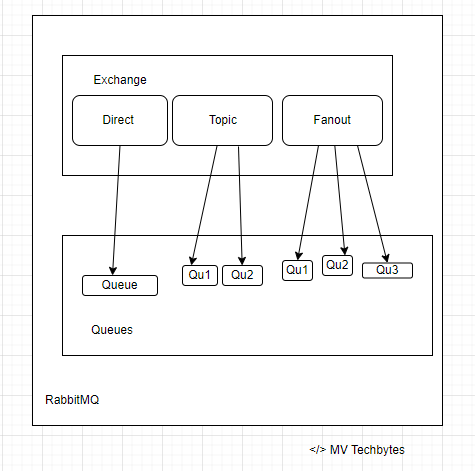

Apache Kafka is a distributed streaming platform that is designed to handle high-volume data streams in real-time. Kafka is a publish-subscribe messaging system that allows data producers to send data to a central broker, which then distributes the data to data consumers. Kafka is designed to be scalable, fault-tolerant, and durable, and it can handle large volumes of data without sacrificing performance.

What is Apache Flink?

Apache Flink is an open-source, distributed stream processing framework that is designed to process large amounts of data in real-time. Flink uses a stream processing model, which means that it processes data as it comes in, rather than waiting for all the data to arrive before processing it. Flink is designed to be fault-tolerant and scalable, and it can handle both batch and stream processing workloads.

Comparison of Apache Kafka and Apache Flink Here are some of the key differences between Apache Kafka and Apache Flink:

- Data processing model Apache Kafka is primarily a messaging system that is used for data transport and storage. While Kafka does provide some basic processing capabilities, its primary focus is on data transport. Apache Flink, on the other hand, is a full-fledged stream processing framework that is designed for data processing.

- Processing speed Apache Kafka is designed to handle high-volume data streams in real-time, but it does not provide any built-in processing capabilities. Apache Flink, on the other hand, is designed specifically for real-time data processing, and it can process data as it comes in, without waiting for all the data to arrive.

- Fault tolerance Both Apache Kafka and Apache Flink are designed to be fault-tolerant. Apache Kafka uses replication to ensure that data is not lost if a broker fails, while Apache Flink uses checkpointing to ensure that data is not lost if a task fails.

- Scalability Both Apache Kafka and Apache Flink are designed to be scalable. Apache Kafka can be scaled horizontally by adding more brokers to the cluster, while Apache Flink can be scaled horizontally by adding more nodes to the cluster.

- Use cases Apache Kafka is commonly used for data transport and storage in real-time applications, such as log aggregation, metrics collection, and messaging. Apache Flink is commonly used for real-time data processing, such as stream analytics, fraud detection, and real-time recommendations.

Conclusion:

Apache Kafka and Apache Flink are both powerful tools that can be used for real-time data streaming and processing. Apache Kafka is primarily a messaging system that is used for data transport and storage, while Apache Flink is a full-fledged stream processing framework that is designed for data processing. Both tools are designed to be fault-tolerant and scalable, but they have different use cases. If you need a messaging system for data transport and storage, Apache Kafka may be the better choice. If you need a full-fledged stream processing framework for real-time data processing, Apache Flink may be the better choice.